Abstractions and translation

Introduction

For the past three weeks, I’ve been building an interpreter for Lisp (more specifically, for a Lisp dialect I created, inspired by Clojure and Scheme). Building a language from the ground up is incredibly fascinating because you get to see in real time how different abstractions come together.

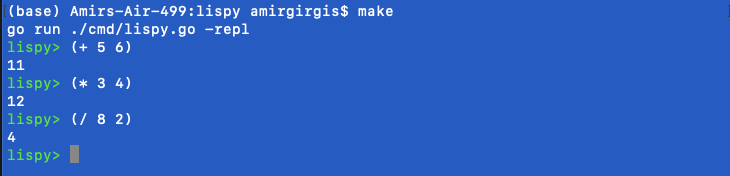

In the beginning, my language was just a simple calculator that handled single expressions.

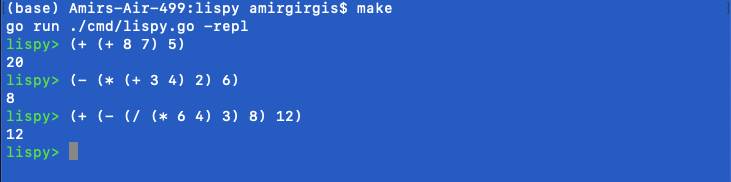

Pretty straightforward, nothing fancy. Then it was a calculator that could handle multiple, nested expressions.

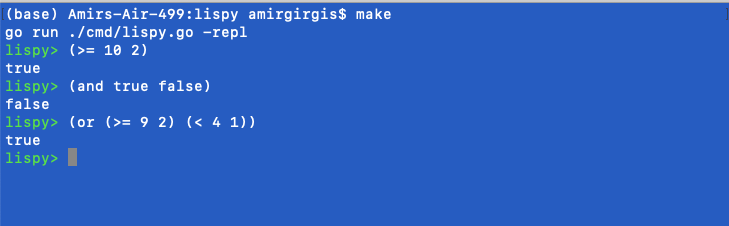

Then it could take on relational and logical operators.

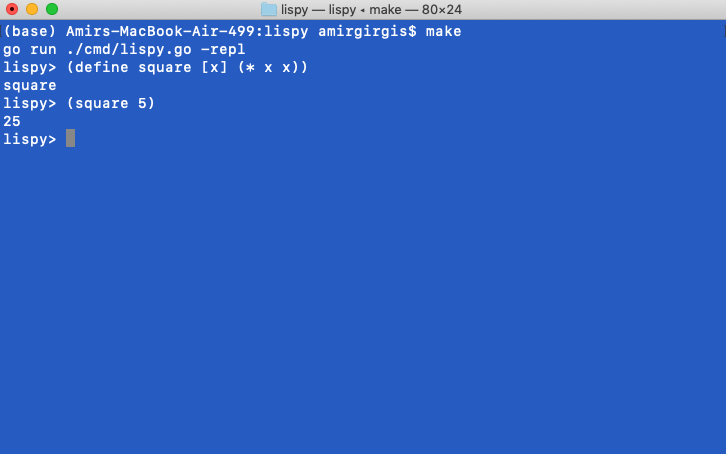

OK, this was getting cooler, but it was still more reminiscent of a calculator than a programming language. What about state? That is, saving, calling, and using previous values. Enter variables and functions.

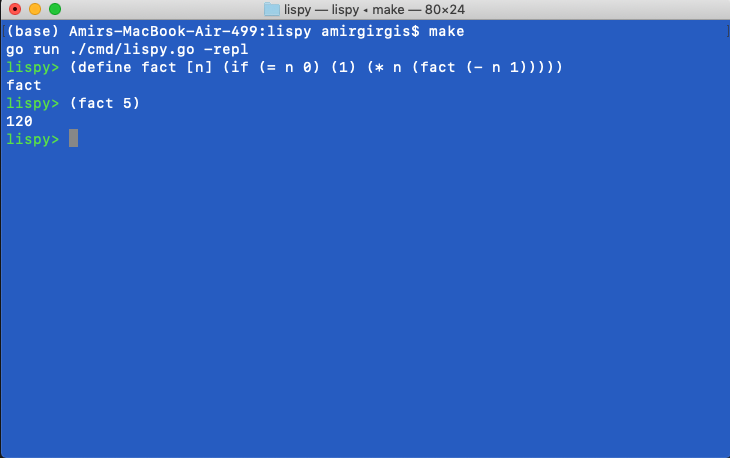

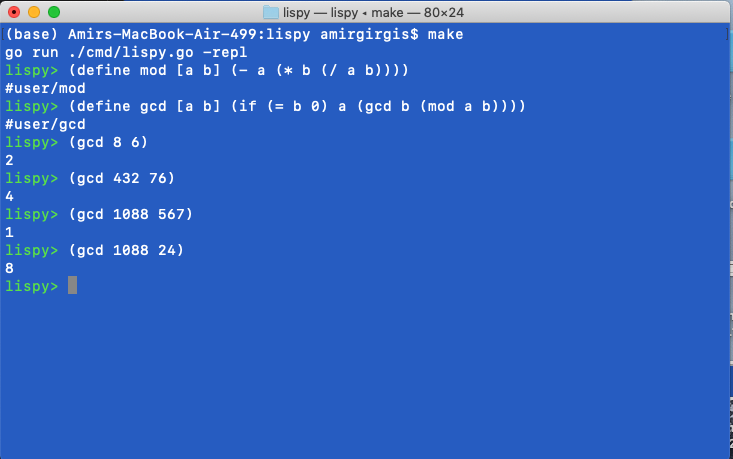
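To make the jump from calculator to state concrete, here is a minimal Python sketch of how an environment can back variables and functions. It is not the interpreter described in this post: the special-form names `define` and `lambda`, the `evaluate` function, and the use of already-parsed nested lists instead of source text are all assumptions made for illustration.

```python
import operator


def evaluate(expr, env):
    """Evaluate an already-parsed expression against an environment (a dict)."""
    if isinstance(expr, str):
        # A symbol: look up the value previously saved under that name.
        return env[expr]
    if not isinstance(expr, list):
        # A literal such as a number evaluates to itself.
        return expr
    head, *rest = expr
    if head == "define":
        # (define name value): save a value in the environment.
        name, value_expr = rest
        env[name] = evaluate(value_expr, env)
        return None
    if head == "lambda":
        # (lambda (params) body): build a function that evaluates its body
        # in a copy of the environment extended with the arguments.
        params, body = rest
        return lambda *args: evaluate(body, {**env, **dict(zip(params, args))})
    # Anything else is a call: evaluate the operator, then the operands.
    function = evaluate(head, env)
    return function(*[evaluate(arg, env) for arg in rest])


# Hypothetical starting environment containing a few built-ins.
global_env = {"+": operator.add, "*": operator.mul, "<": operator.lt}

evaluate(["define", "x", 10], global_env)
evaluate(["define", "square", ["lambda", ["n"], ["*", "n", "n"]]], global_env)
print(evaluate(["square", "x"], global_env))  # 100
```

The only new idea compared with a calculator is the dictionary: once names can be saved and looked up, functions built on other functions follow from the same lookup rule.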

Level by level, I climbed the ladder of abstraction and watched the language unfold. First I was writing functions in Lispy (the name I gave the language, inspired by this), then functions that used other functions, then functions that used other functions that used other functions, and it was off to the races.

Languages and Meaning

Three weeks ago, before the interpreter existed, the rules and syntax governing my language had no meaning (in my little domain). In fact, the rules and grammar on their own were just gibberish, worthless to an outsider who did not know what they represented. They were just letters arranged according to certain design choices. But the interpreter gave them meaning.

This is pretty profound: we use language so often that I sometimes forget how high up the abstraction ladder we are. Language is powerful because it carries meaning, but the syntax of a language by itself does not. Syntax becomes a language when it encodes the underlying abstractions it was intended to encode. For example, at the start my interpreter could not recognize that (+ 2 2) was a binary operation that produced a result, until I gave it the tools to encode this abstraction.
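As a rough illustration of what “giving it the tools” can look like, the Python sketch below turns the characters `(+ 2 2)` into a result. It is a simplified stand-in, not this post’s interpreter: the function names `tokenize`, `parse`, and `evaluate` and the `OPERATORS` table are assumptions, and it handles only integer literals and binary arithmetic.

```python
import operator

# Hypothetical operator table: this mapping is where "+" acquires a meaning.
OPERATORS = {
    "+": operator.add,
    "-": operator.sub,
    "*": operator.mul,
    "/": operator.truediv,
}


def tokenize(source):
    """Split source text into tokens, treating parentheses as separate tokens."""
    return source.replace("(", " ( ").replace(")", " ) ").split()


def parse(tokens):
    """Consume tokens from the front and build a nested list (or an atom)."""
    token = tokens.pop(0)
    if token == "(":
        expr = []
        while tokens[0] != ")":
            expr.append(parse(tokens))
        tokens.pop(0)  # discard the closing ")"
        return expr
    try:
        return int(token)
    except ValueError:
        return token  # anything that is not an integer is kept as a symbol


def evaluate(expr):
    """Evaluate an integer literal or a binary operation such as (+ 2 2)."""
    if isinstance(expr, int):
        return expr
    if not isinstance(expr, list) or len(expr) != 3:
        raise ValueError(f"expected (operator left right), got {expr!r}")
    op, left, right = expr
    if not isinstance(op, str) or op not in OPERATORS:
        raise NameError(f"unknown operator: {op!r}")
    return OPERATORS[op](evaluate(left), evaluate(right))


print(tokenize("(+ 2 2)"))                   # ['(', '+', '2', '2', ')']
print(evaluate(parse(tokenize("(+ 2 2)"))))  # 4
```

Before `evaluate` exists, `(+ 2 2)` is only a list of five tokens. The meaning of `+` lives entirely in the `OPERATORS` table and the rule that applies it. Unbalanced parentheses are not handled in this sketch.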

These abstractions are powerful, but they’re also just small building blocks. How you construct them and what you do with them is limited only by the imagination of the user who understands them. This is why we can code, why we write, why we create music, why we talk, why we communicate in any form.

Language is useful to the extent that it encodes information (ideas and abstractions) about the world. The language we use to convey information is thus the bridge between the abstractions we represent and the meaning we convey.

Communication and Translation

I like this analogy because it lends itself nicely to another important question. If a language bridges abstraction and meaning, to what extent is communication just translation?

Code is translated to machine code. Machine code is translated to signals at the transistor level. Music is translated to sound, which is just vibrations of particles. Ingredients are translated to chemical compounds that we (sometimes) eat. Even the languages we use to communicate with each other are translated: for different countries and dialects, for different cultures and ages. This is true even within a language! Assuming we’re speaking the same language, when I see something I want to communicate, I take the idea, concept, or thing I want to represent and encode (translate) it into words. You hear these words, then decode (translate) the meaning back from them.

Any form of communication can be boiled down to a sequence of translations across domains. The bottleneck of communication then becomes how much complexity the language in each domain can represent.

Closing Thoughts

To leave you with some food for thought, here are some questions I’ve been pondering:

- If communication is just translation, how much meaning is lost in the process?

- If language is the tool with which we represent abstractions, what would a language richer than the ones we have now (richer in how much information it can represent) look like?